For information and updates, contact: glotin@univ-tln.fr

Data and submission of results through Kaggle.com

Avian Data

Whale Data

The field of bioacoustics faces challenges similar to those in the broader machine learning and advanced signal processing communities. In bioacoustics, however, several factors can undermine the robustness of existing machine learning algorithms: the unknown extent of signal feature variability, the unknown informational significance of features, and limited knowledge of signal semantics. As a result, these methods must be heavily tuned and adapted to each collection of data.

As previously mentioned, bioacoustics aims to analyze and model animal sounds for biodiversity assessment. However, the amount of collected data is large, and species differ in taxonomy and environmental context. It would therefore be intractable to analyze each sound individually, or even to offer general solutions that apply across species.

The goal of the technical session is to provide possible solutions for targeted applications that are popular in the field but remain only partially solved or require significant manual intervention. Moreover, we hope that building a representative, standardized collection of validated acoustic data will provide a much-needed comparative framework within the bioacoustics community.

We hope the proposed workshop will become a repeated event. If so, subsequent technical challenges would focus on increasingly complex acoustic phenomena, each representative of a particular set of signal types that generally occupy a high-dimensional acoustic feature space. Examples include:

- short-duration, broadband transients;
- hierarchically organized combinations of stereotypic, frequency-modulated sounds, syllables, and phrases repeated in long bouts;
- hierarchically organized combinations of highly variable, two-voiced, frequency- and amplitude-modulated sounds repeated in long bouts.

Further complexity could come from acoustic scenes with multiple, highly variable sources, or from data recorded by coherent multi-sensor systems. Such challenges would yield a diverse set of algorithms. These would enable parallel or cross-comparison of classification schemes and help clarify how different algorithms perform at different levels of acoustic complexity. The expected outcome is a significant advance in our ability to explore and understand different classes of bioacoustic data in their natural context and across the broad spectrum of biodiversity.

Technical Challenge Data

The automatic analysis of marine mammal sounds has long interested the bioacoustics community for three reasons: the sounds are intrinsically complex, these over-exploited species are a source of concern, and in the U.S. marine mammals are explicitly protected by statute. Avian songs and calls attract the same level of interest. They are easily experienced and aesthetically pleasing, and they have served as the basis for a rich and productive history of fundamental research on vocal ontogeny, memory, and computational neuroscience.

For these reasons, we propose that the first Technical Challenge focus on the sounds of marine mammals and birds.

A training, a development, and a test set will be provided for each data set. For both the avian and the marine mammal data, the development sets will consist of artificial mixtures, which offer a controlled environment for building systems. The test sets, in contrast, will contain real-life mixtures, exposing the systems to the variability and diversity of bioacoustic recordings.
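The description does not say how the artificial mixtures in the development sets are built. Participants may want to generate extra mixtures of their own. Below is a minimal sketch that assumes one common approach: add a noise recording to a clean call at a chosen signal-to-noise ratio (SNR). The noise is rescaled so that 10·log10(P_call / P_noise) equals the target value. The function names are hypothetical, the synthetic tone and Gaussian noise are illustrative stand-ins for real recordings, and only the Python standard library is used.

```python
import math
import random
from typing import List


def signal_power(signal: List[float]) -> float:
    """Mean power of a signal: the average of its squared samples."""
    return sum(x * x for x in signal) / len(signal)


def mix_at_snr(call: List[float], noise: List[float], target_snr_db: float) -> List[float]:
    """Add `noise` to `call`, rescaling the noise so that
    10 * log10(P_call / P_noise) equals `target_snr_db`."""
    if len(call) != len(noise):
        raise ValueError("call and noise must have the same length")
    p_noise = signal_power(noise)
    if p_noise == 0:
        raise ValueError("noise must not be silent")
    # Noise power required for the target SNR, converted to an amplitude gain.
    target_p_noise = signal_power(call) / 10 ** (target_snr_db / 10)
    gain = math.sqrt(target_p_noise / p_noise)
    return [c + gain * n for c, n in zip(call, noise)]


def measured_snr_db(call: List[float], noise: List[float]) -> float:
    """SNR in dB between a clean signal and the noise added to it."""
    return 10 * math.log10(signal_power(call) / signal_power(noise))


if __name__ == "__main__":
    sr = 44100  # sampling rate of the avian recordings
    # Hypothetical one-second synthetic "call": a 3 kHz tone.
    call = [math.sin(2 * math.pi * 3000 * i / sr) for i in range(sr)]
    rng = random.Random(0)
    noise = [rng.gauss(0.0, 1.0) for _ in range(sr)]
    mixture = mix_at_snr(call, noise, target_snr_db=5.0)
    residual = [m - c for m, c in zip(mixture, call)]
    print(measured_snr_db(call, residual))  # very close to 5.0
```

The 5 dB target in the usage example falls within the avian TEST SNR range given in the data table. The organizers' own mixing procedure may differ.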

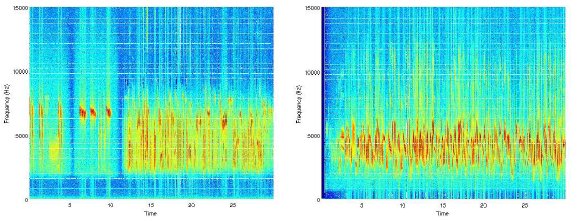

Examples from the avian data set are shown in the images below.

| Data | Avian (from MNHN Paris) | Marine mammals (from Cornell Univ) |
|---|---|---|
| Description | TRAIN: Clean recordings from the MNHN sound library [1]; TEST: Recordings from the Regional Park of the Upper Chevreuse Valley in France, made daily for 30 min prior to sunrise | TRAIN: Recordings of a single species (one call per hour), Right Whale (3 types of calls); TEST: Recordings in the wild from the Stellwagen Bank National Marine Sanctuary |
| Duration | TRAIN: 30 s x 35 bird recordings ≈ 18 min; TEST: 150 s x 3 mics x 90 recordings ≈ 11.2 hours | TRAIN: approximately 48 hours x 1 species; TEST: approximately 96 hours |
| Equipment | TRAIN: 1 microphone, 16 bit, 44.1 kHz; TEST: 3 microphones on trees in the same area, in different forest states (mature, young, open), 16 bit, 44.1 kHz [2]; Audio format: .wav | Hydrophones, 16 bit, 2-10 kHz; MARU, bit depth = 11, sensitivity -167.5 dB re: 1 µPa/V; Audio format: .aif |
| SNR | TRAIN: 20-60 dB; TEST: 5-9 dB | TRAIN: 1-20 dB; TEST: 1-20 dB |
| Ground truth | Sample level: call/no call; Call level: species class | Sample level: call/no call, frequency start/end; Call level: species class (by expert spectrogram analysis and/or visual identification in the field) |
| Features | Raw audio; Mel-frequency cepstral coefficients are given | Raw audio |
| Meta-data | TRAIN / TEST: the phylogenetic tree of the target species (usual format, including an R package to read and manipulate it); Location, Time, Temperature, Meteo | Location (longitude, latitude), Time, Depth, ambient sound level |
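Before training, it can help to check that the downloaded files match the formats listed in the table. The sketch below uses only Python's standard `wave` module. It reads a .wav header and compares it with the avian specification (16 bit, 44.1 kHz). It also recomputes the avian totals from the Duration row: 1050 s (17.5 min) and 40500 s (11.25 h). The helper names are hypothetical. The whale files are .aif, which `wave` cannot read. The standard-library `aifc` module was removed in Python 3.13, so those files would need a third-party reader such as `soundfile`. That choice is an assumption about your environment, not part of the challenge kit.

```python
import io
import wave
from dataclasses import dataclass


@dataclass
class WavInfo:
    channels: int
    sample_width_bits: int
    sample_rate_hz: int
    duration_s: float


def read_wav_info(source) -> WavInfo:
    """Read the header of a PCM .wav file (a path or a binary file object)."""
    with wave.open(source, "rb") as w:
        rate = w.getframerate()
        return WavInfo(
            channels=w.getnchannels(),
            sample_width_bits=8 * w.getsampwidth(),
            sample_rate_hz=rate,
            duration_s=w.getnframes() / rate,
        )


def matches_avian_spec(info: WavInfo) -> bool:
    """Compare with the avian Equipment row: 16 bit, 44.1 kHz."""
    return info.sample_width_bits == 16 and info.sample_rate_hz == 44100


# Totals from the Duration row, recomputed in seconds.
AVIAN_TRAIN_S = 30 * 35       # 30 s x 35 recordings
AVIAN_TEST_S = 150 * 3 * 90   # 150 s x 3 mics x 90 recordings

if __name__ == "__main__":
    # Build a one-second silent 16-bit mono file in memory to demonstrate the check.
    buf = io.BytesIO()
    with wave.open(buf, "wb") as w:
        w.setnchannels(1)
        w.setsampwidth(2)
        w.setframerate(44100)
        w.writeframes(b"\x00\x00" * 44100)
    buf.seek(0)
    info = read_wav_info(buf)
    print(info, matches_avian_spec(info))
    print(AVIAN_TRAIN_S / 60, AVIAN_TEST_S / 3600)  # 17.5 11.25
```

To check the real training files, pass a path such as `read_wav_info("some_recording.wav")` (a hypothetical file name) instead of the in-memory buffer.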

[1] The data for this challenge are copyrighted by Fernand Deroussen and Jerome Sueur of the Muséum national d'Histoire naturelle; their use is restricted to this challenge. The competition test data were graciously provided by Jerome Sueur.

More details on the training data are given in: Deroussen, F., 2001. Oiseaux des jardins de France. Nashvert Production, Charenton, France; Deroussen, F., Jiguet, F., 2006. La sonotheque du Museum: Oiseaux de France, les passereaux. Nashvert Production, Charenton, France; http://naturophonia.fr.

[2] Depraetere, M., Pavoine, S., Jiguet, F., Gasc, A., Duvail, S., Sueur, J. Monitoring animal diversity using acoustic indices: Implementation in a temperate woodland. Ecological Indicators, 13: 46-54.

The ground truth of each test set will be used to score each system and will be distributed after the deadline for working notes. Participants must not hand-label the test set to tune their models.

Submission guidelines are simple. Please follow the instructions on Kaggle, available through the links below:

Avian Data

Whale Data

Tasks

As part of the challenge, different thematic approaches can be proposed that reflect the problems most commonly encountered in the field. For both avian and marine mammal sounds, the major thematic approaches include:

- (i) Species detection

- (ii) Species classification

- (iii) Call extraction

- (iv) Tracking/Localization
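The challenge does not prescribe any method for these tasks. As a purely illustrative starting point for (i) species detection, here is a hypothetical energy-threshold detector. It outputs frame-level call/no-call labels, comparable in spirit to the call/no-call ground truth described in the data table. The frame length, hop size, and 6 dB threshold are illustrative assumptions, not challenge parameters. Using the median frame energy as the noise floor also assumes that calls occupy fewer than half of the frames.

```python
import math
from typing import List, Sequence


def frame_energies_db(signal: Sequence[float], frame_len: int, hop: int) -> List[float]:
    """Mean power of each analysis frame in dB; a small floor avoids log(0)."""
    energies = []
    for start in range(0, len(signal) - frame_len + 1, hop):
        frame = signal[start:start + frame_len]
        power = sum(x * x for x in frame) / frame_len
        energies.append(10 * math.log10(power + 1e-12))
    return energies


def detect_calls(signal: Sequence[float], frame_len: int = 1024, hop: int = 512,
                 threshold_db: float = 6.0) -> List[int]:
    """Label each frame 1 (call) or 0 (no call).

    A frame is flagged when its energy exceeds the median frame energy,
    used here as a crude noise-floor estimate, by more than `threshold_db`.
    """
    energies = frame_energies_db(signal, frame_len, hop)
    if not energies:
        return []
    noise_floor = sorted(energies)[len(energies) // 2]  # (upper) median
    return [1 if e > noise_floor + threshold_db else 0 for e in energies]


if __name__ == "__main__":
    # Hypothetical test signal: a faint tone with a loud burst in the middle.
    n = 16384
    signal = [
        (1.0 if 4096 <= i < 8192 else 0.01) * math.sin(2 * math.pi * 0.05 * i)
        for i in range(n)
    ]
    labels = detect_calls(signal)
    print(labels)  # frames 7 to 15 (the ones overlapping the burst) are flagged
```

A real system would operate on spectrogram bands rather than broadband energy, since overlapping species and the low test SNRs listed in the data table make broadband thresholds unreliable.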

In this proposal, we recommend a technical challenge in automatic classification, because this task is likely to benefit the bioacoustics community greatly. Our proposed challenge is outlined below:

- Task 1: Species Recognition/Clustering

- The goal of this task is to recognize the avian and marine mammal species in their respective recordings. This is a multi-class problem that allows participants to explore both supervised and unsupervised methods. Emphasis will be placed on recognition rate rather than on computational cost.

- Task 2: Free challenge

- Using the bird data set, participants may extract any meaningful environmental or ecological information with machine learning tools. The goal is to find meaningful ecological correlations between the avian recordings and the provided meta-data for each test set. The meta-data include phylogenetic data, which could match some acoustic cues, and weather data such as wind and sun.
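Task 1 emphasizes recognition rate, but this page does not define the official metric; evaluation follows the Kaggle guidelines. The sketch below takes recognition rate as a simple fraction of correctly labelled calls and adds a confusion count that shows which species are mistaken for which. For Task 2, it assumes a Pearson correlation between a per-recording acoustic quantity and a meta-data field. Both examples are hypothetical: the number of detected calls and temperature are illustrative choices, and the inputs in the usage example are made-up values, not challenge data.

```python
import math
from collections import Counter
from typing import Hashable, Sequence


def recognition_rate(true_labels: Sequence[Hashable],
                     predicted_labels: Sequence[Hashable]) -> float:
    """Fraction of calls whose predicted species equals the true species."""
    if len(true_labels) != len(predicted_labels) or not true_labels:
        raise ValueError("need two non-empty label sequences of equal length")
    correct = sum(t == p for t, p in zip(true_labels, predicted_labels))
    return correct / len(true_labels)


def confusion_counts(true_labels: Sequence[Hashable],
                     predicted_labels: Sequence[Hashable]) -> Counter:
    """Count (true, predicted) pairs; pairs with t != p are confusions."""
    return Counter(zip(true_labels, predicted_labels))


def pearson_r(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation coefficient between two equal-length series."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length series with at least 2 points")
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    if sx == 0 or sy == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / (sx * sy)


if __name__ == "__main__":
    truth = ["A", "B", "B", "C"]
    pred = ["A", "B", "C", "C"]
    print(recognition_rate(truth, pred))              # 0.75
    print(confusion_counts(truth, pred)[("B", "C")])  # 1
    # Hypothetical Task 2 inputs: calls detected per recording vs. temperature (°C).
    calls = [12, 18, 25, 31]
    temperature = [4.0, 7.5, 11.0, 14.0]
    print(pearson_r(calls, temperature))
```

A correlation found this way only suggests an ecological link. Controlling for confounders such as time of day or microphone location would be part of any serious Task 2 analysis.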

Data samples are given below: